Though Google provides a pretrained BERT checkpoint for English, you may often need Will represent a disconnect from the first sentence. The assumption is that the random sentence Subsequent sentence in the original document, while in the other 50% a random sentenceįrom the corpus is chosen as the second sentence. During training, 50% of the inputs are a pair in which the second sentence is the Predict if the second sentence in the pair is the subsequent sentence in the originalĭocument. In the BERT training process, the model receives pairs of sentences as input and learns to

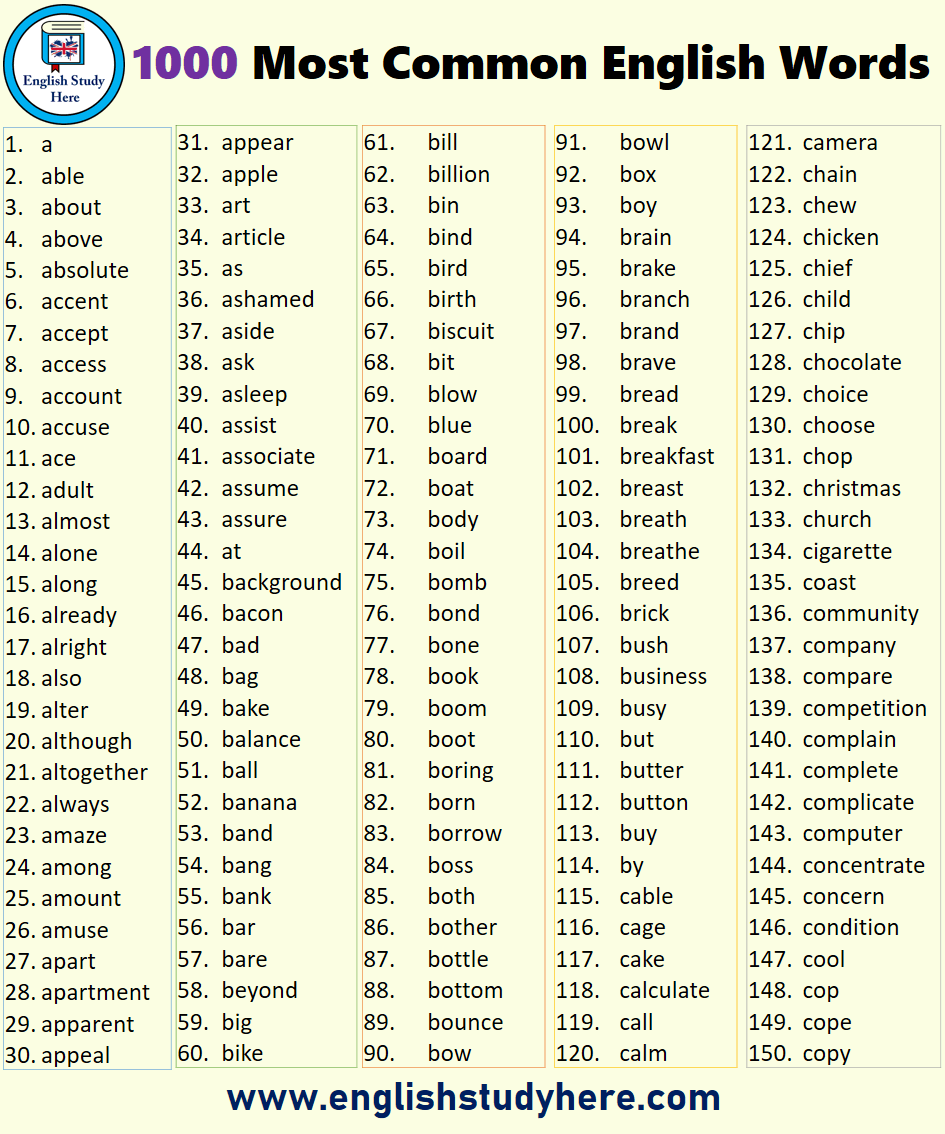

Words, based on the context provided by the other, non-masked, words in the sequence. The model then attempts to predict the original value of the masked To overcome thisĬhallenge, BERT uses two training strategies: Masked Language Modeling (MLM)īefore feeding word sequences into BERT, 15% of the words in each sequence are replaced "The child came home from _"),Ī directional approach which inherently limits context learning. Many models predict the next word in a sequence (e.g. When training language models, a challenge is defining a prediction goal. This characteristicĪllows the model to learn the context of a word based on all of its surroundings It would be more accurate to say that it’s non-directional. Therefore it is considered bidirectional, though (left-to-right or right-to-left), the Transformer encoder reads the entire The detailed workings of Transformer are described inĪs opposed to directional models, which read the text input sequentially Since BERT’s goal is to generate a language model, only theĮncoder mechanism is necessary. Separate mechanisms - an encoder that reads the text input and a decoder that producesĪ prediction for the task. In its vanilla form, Transformer includes two Have shown that a similar technique can be useful in many natural language tasks.īERT makes use of Transformer, an attention mechanism that learns contextual relationsīetween words (or subwords) in a text. Network as the basis of a new specific-purpose model. Instance ImageNet classification, and then performing fine-tuning - using the trained neural Transfer learning - pretraining a neural network model on a known task/dataset, for In the field of computer vision, researchers have repeatedly shown the value of Introduction BERT (Bidirectional Encoder Representations from Transformers)
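The difference between directional and non-directional reading can be pictured as an attention mask. The mask is a boolean matrix that says which positions each token is allowed to look at.

This is only an illustrative sketch, and the function names (`causal_mask`, `full_mask`, `visible_context`) are hypothetical. Real Transformer implementations apply such masks inside the attention computation rather than filtering token lists.

```python
from typing import List


def causal_mask(n: int) -> List[List[bool]]:
    """Directional (left-to-right) model: position i may attend only to positions 0..i."""
    return [[j <= i for j in range(n)] for i in range(n)]


def full_mask(n: int) -> List[List[bool]]:
    """Non-directional encoder: every position may attend to every position."""
    return [[True] * n for _ in range(n)]


def visible_context(mask: List[List[bool]], i: int, tokens: List[str]) -> List[str]:
    """Return the tokens that position i is allowed to see under the given mask."""
    return [tok for tok, allowed in zip(tokens, mask[i]) if allowed]


tokens = ["the", "child", "came", "home", "from"]
n = len(tokens)
print(visible_context(causal_mask(n), 2, tokens))  # ['the', 'child', 'came']
print(visible_context(full_mask(n), 2, tokens))    # ['the', 'child', 'came', 'home', 'from']
```

Under the causal mask, "came" never sees the words that follow it. Under the full mask, it sees the whole sequence, which is what lets the encoder use context from both sides.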
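The masking step described under Masked Language Modeling can be sketched as follows. This is a simplified illustration that follows the description above and assumes whitespace-split words rather than a real subword tokenizer. `mask_tokens` and the `MASK` constant are hypothetical names, and the model that would consume the output is not shown.

```python
import random
from typing import Dict, List, Optional, Tuple

MASK = "[MASK]"  # placeholder symbol, assumed for illustration


def mask_tokens(tokens: List[str], rate: float = 0.15,
                rng: Optional[random.Random] = None) -> Tuple[List[str], Dict[int, str]]:
    """Replace roughly `rate` of the tokens with MASK.

    Returns the masked sequence and a mapping {position: original token};
    the mapping holds the MLM prediction targets.
    """
    if not tokens:
        return [], {}
    rng = rng or random.Random()
    # Assumption: mask at least one token so every sequence yields a target.
    k = max(1, round(len(tokens) * rate))
    positions = rng.sample(range(len(tokens)), k)
    masked = list(tokens)
    targets: Dict[int, str] = {}
    for pos in sorted(positions):
        targets[pos] = masked[pos]  # remember the original word
        masked[pos] = MASK          # hide it from the model
    return masked, targets


sentence = "the child came home from school and then ate dinner with the whole family".split()
masked, targets = mask_tokens(sentence, rng=random.Random(0))
print(" ".join(masked))
print(targets)  # the words the model must recover, keyed by position
```

Because the targets are keyed by position, the training loss is computed only on the hidden words. The unmasked words serve purely as context.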
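The 50/50 pair construction described under Next Sentence Prediction can be sketched like this. `make_nsp_pairs` is a hypothetical helper, not a library function, and it assumes the corpus is already split into documents and sentences.

One design choice goes beyond the text: the random second sentence is drawn from a different document. This ensures a negative example can never be the true continuation by accident. Sampling from the whole corpus, as described above, would occasionally produce such a mislabeled pair.

```python
import random
from typing import List, Optional, Tuple


def make_nsp_pairs(documents: List[List[str]],
                   rng: Optional[random.Random] = None) -> List[Tuple[str, str, bool]]:
    """Build (first, second, is_next) examples for next sentence prediction.

    For each pair of consecutive sentences, with probability 0.5 the second
    sentence is the true continuation (is_next=True); otherwise it is a random
    sentence taken from a different document (is_next=False).
    """
    if sum(1 for doc in documents if doc) < 2:
        raise ValueError("need at least two non-empty documents")
    rng = rng or random.Random()
    pairs: List[Tuple[str, str, bool]] = []
    for d, doc in enumerate(documents):
        # Candidate "random" sentences: everything outside the current document.
        others = [s for k, other in enumerate(documents) if k != d for s in other]
        for i in range(len(doc) - 1):
            if rng.random() < 0.5:
                pairs.append((doc[i], doc[i + 1], True))
            else:
                pairs.append((doc[i], rng.choice(others), False))
    return pairs


docs = [
    ["The child came home from school.", "She put her bag down.", "Then she ate dinner."],
    ["Stocks fell on Monday.", "Analysts expect a rebound."],
]
pairs = make_nsp_pairs(docs, random.Random(0))
for first, second, is_next in pairs:
    print(f"{is_next!s:5} | {first} -> {second}")
```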
